ECHELON Software Takes on Billion Cell

See how ECHELON enables the modeling and simulation of giant conventional reservoirs.

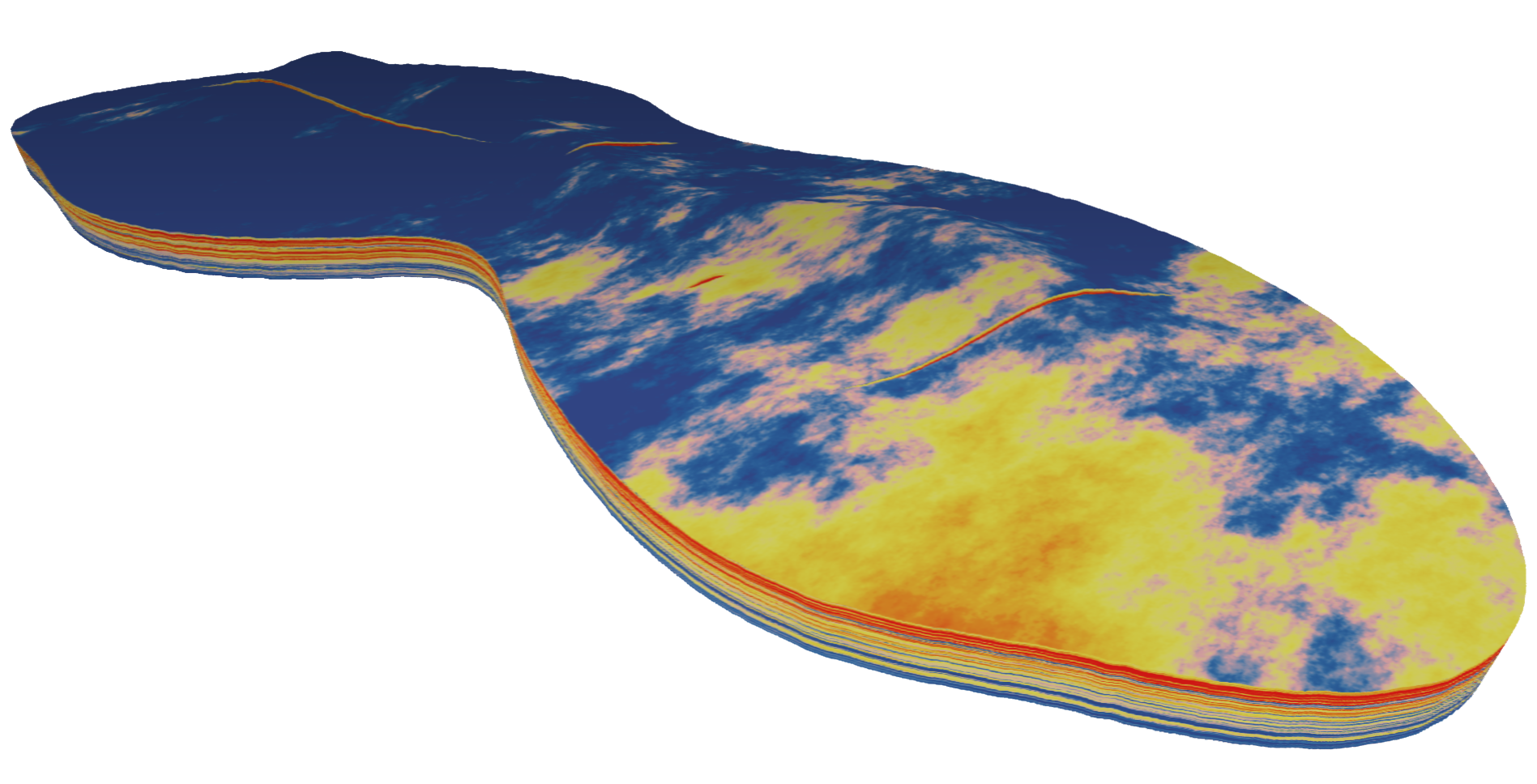

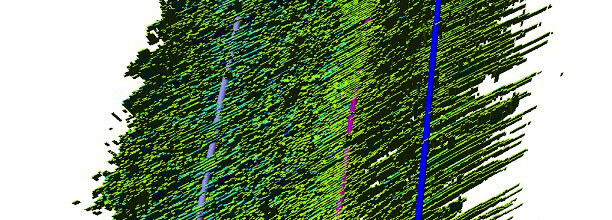

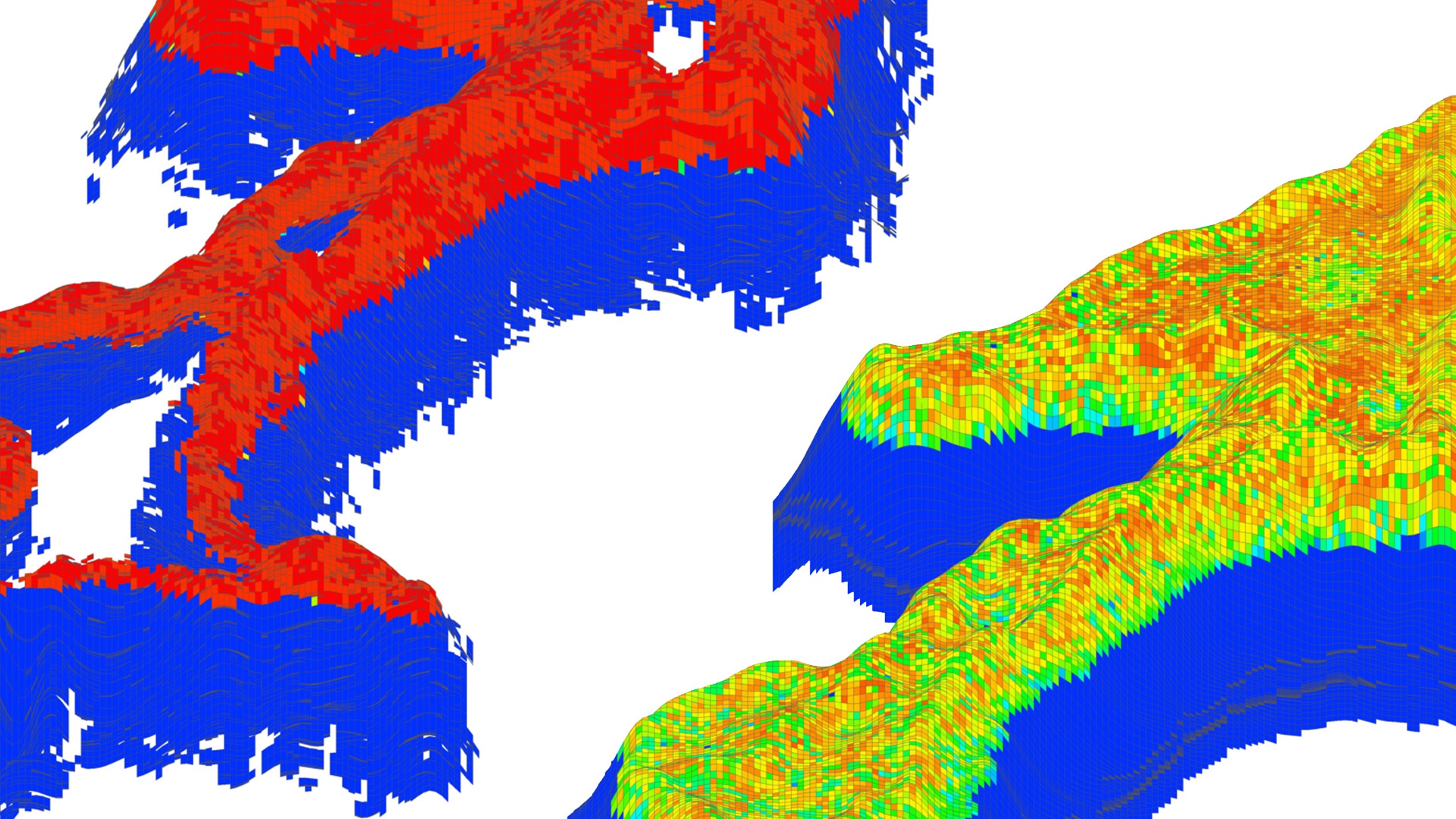

For the vast majority of simulation cases, a billion cells is several orders of magnitude larger than what is commonly used. At this "hero scale" the main goal is to stress-test simulators and to show off capability. Typical reservoir models used in the industry range in size from a few hundred thousand to a few million cells. If we think of a typical million-cell model as a standard HDTV, then the billion-cell example would have 250 times the resolution of a 4K television, which itself has four times the pixels of HDTV. It gives enormous resolution and clarity, but comes at a high computational cost.

The purpose of our billion-cell calculation was to highlight ECHELON's capabilities and the efficiencies that GPU computing offers. With help from colleagues at iReservoir, we created a model using publicly available log data collected from a large Middle East carbonate field. We built a three-phase model with 1.01 billion cells and 1,056 wells.

ECHELON reservoir simulation software is a massively parallel, fully implicit, extended black-oil reservoir simulator built from inception to take full advantage of the fine-grained parallelism and massive compute capability offered by modern Graphics Processing Units (GPUs). These GPUs provide a dense computing platform with ultrahigh memory bandwidth and extreme arithmetic throughput. ECHELON software combines massively parallel GPU hardware, modern solver algorithms and careful implementation to enable efficient simulation of models from hundreds of thousands to billions of cells. It does so at speeds that make it practical to simulate hundreds of realizations of large, complex models in vastly less time, all while using far fewer hardware resources than CPU-based solutions. The principal conclusion we draw from our results is that ECHELON software used an order of magnitude fewer server nodes and two orders of magnitude fewer domains to achieve an order of magnitude greater calculation speed than those reported by analogous CPU-based codes.

We simulated this model in 92 minutes on 30 IBM POWER8 nodes, each with 4 NVIDIA Tesla P100 GPUs. In contrast, previous billion-cell calculations used over 500 nodes and took 20 hours. The calculation and the results powerfully illustrate i) the capability of GPUs for large-scale physical modeling, ii) the performance advantages of GPUs over CPUs, and iii) the efficiency and density of solution that GPUs offer.
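To put the figures above side by side, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted in this article. The variable names are our own, the even split of cells across GPUs is an assumption made for illustration, and "over 500 nodes" is treated as exactly 500, so the ratios are lower bounds. The earlier CPU runs used different models and hardware, so the comparison is indicative rather than a controlled benchmark.

```python
# Figures quoted in the article
cells = 1.01e9           # cells in the model
gpu_nodes = 30           # IBM POWER8 nodes
gpus_per_node = 4        # NVIDIA Tesla P100 GPUs per node
gpu_minutes = 92         # reported ECHELON run time
cpu_nodes = 500          # "over 500 nodes": treated as a lower bound
cpu_minutes = 20 * 60    # reported 20 hours for earlier CPU runs

# Derived quantities
total_gpus = gpu_nodes * gpus_per_node
cells_per_gpu = cells / total_gpus          # assumes an even split (illustrative)
speedup = cpu_minutes / gpu_minutes         # wall-clock ratio
node_ratio = cpu_nodes / gpu_nodes          # ratio of server node counts
gpu_node_hours = gpu_nodes * gpu_minutes / 60
cpu_node_hours = cpu_nodes * cpu_minutes / 60
node_hour_ratio = cpu_node_hours / gpu_node_hours

print(f"GPUs used:            {total_gpus}")
print(f"Cells per GPU:        {cells_per_gpu:,.0f}")
print(f"Wall-clock speedup:   {speedup:.1f}x")
print(f"Node count ratio:     {node_ratio:.1f}x")
print(f"Node-hours (GPU run): {gpu_node_hours:.0f}")
print(f"Node-hours (CPU run): {cpu_node_hours:,.0f}")
print(f"Node-hour ratio:      {node_hour_ratio:.0f}x")
```

Under these assumptions the run used 120 GPUs at roughly 8.4 million cells each, finished about 13 times faster on about 17 times fewer nodes, and consumed on the order of 200 times fewer node-hours.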

Deep dive into our software product and its efficacy.

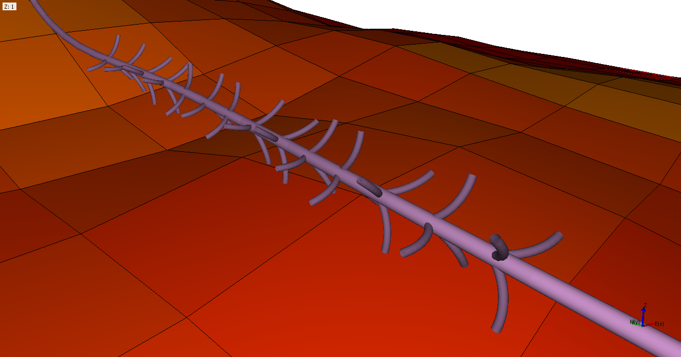

Evaluating the productivity enhancements of Fishbones in fractured carbonate reservoirs using high-performance cloud-based reservoir simulation.

View case study →

Workflow to manage the operational challenges in an unconventional field development requires full field simulation.

View case study →

ECHELON software tackles an extremely difficult compositional model. A demonstration of the robustness of ECHELON software.

View case study →